Selected Projects


Score-Based Density Estimation from Pairwise Comparisons

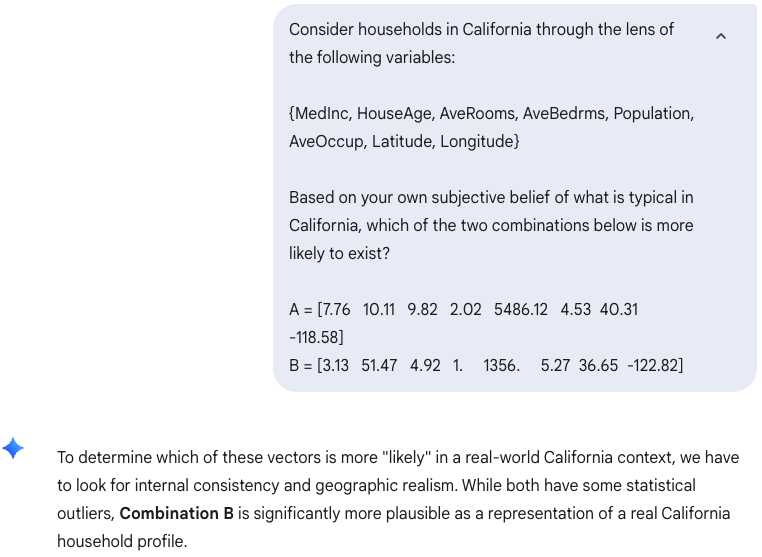

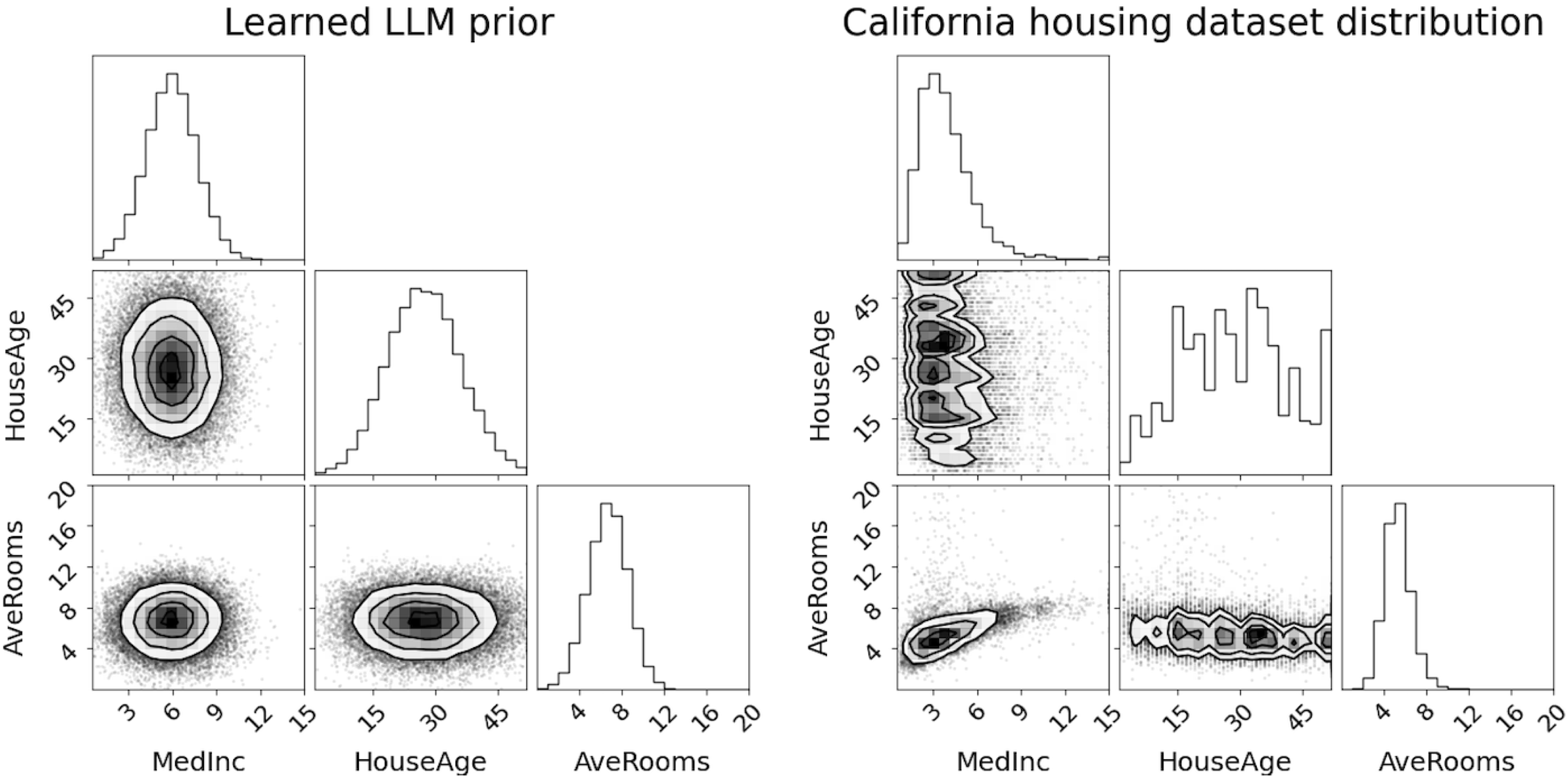

We consider the problem of learning a probability density that represents an underlying belief (of a human or an LLM) solely from pairwise comparisons. For score-based methods such as diffusion models, we show a theoretical result that allows the problem to be cast as score-based density estimation rather than maximum likelihood estimation (MLE). The density can therefore be learned as a diffusion model, bridging the gap between preference learning and generative modeling. Some applications:

- Prior elicitation: joint prior distribution from expert pairwise comparisons

- Reward modeling: probabilistic reward model from user pairwise comparisons

- LLM elicitation: an LLM's belief from prompted pairwise comparisons
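To make the data format concrete, here is a minimal sketch of how pairwise comparisons could be simulated from a known belief density. The choice model (logistic noise on the log-density difference, in the style of Bradley-Terry) and the names `log_belief`, `compare`, and `make_dataset` are illustrative assumptions, not the project's actual setup, and the sketch covers only data generation, not the score-based estimator.

```python
import math
import random


def log_belief(x: float) -> float:
    """Hypothetical 1-D belief: standard normal log-density up to a constant."""
    return -0.5 * x * x


def compare(x_a: float, x_b: float, rng: random.Random) -> int:
    """Return 0 if x_a is judged more probable than x_b, otherwise 1.

    Assumed choice model (not taken from the source): the probability of
    picking x_a is a logistic function of the log-density difference.
    """
    diff = log_belief(x_a) - log_belief(x_b)
    p_a = 1.0 / (1.0 + math.exp(-diff))
    return 0 if rng.random() < p_a else 1


def make_dataset(n: int, seed: int = 0):
    """Draw n candidate pairs uniformly on [-3, 3] and label each with a winner."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x_a, x_b = rng.uniform(-3.0, 3.0), rng.uniform(-3.0, 3.0)
        data.append((x_a, x_b, compare(x_a, x_b, rng)))
    return data
```

Each record is a triple `(x_a, x_b, winner)`. A density learner would see only these triples and never the values of `log_belief` themselves.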

Prompt the LLM with simple comparative questions

A diffusion model trained on the comparisons, and thus reflecting the LLM's belief, resembles the true data distribution

Preferential Normalizing Flows

We show how to learn a probability density as a normalizing flow from preference data. Some applications:

- Prior elicitation: joint prior distribution from expert rankings or comparisons

- Reward modeling: probabilistic reward model from user rankings or comparisons

- LLM elicitation: an LLM's belief from prompted rankings or comparisons
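As a reminder of what representing a density as a normalizing flow means, the sketch below evaluates the log-density of a one-dimensional affine flow through the change-of-variables formula. It is a generic illustration with hypothetical names (`base_log_prob`, `flow_log_prob`). It does not use normflows and does not implement the preference-based training objective, which is not described here.

```python
import math


def base_log_prob(z: float) -> float:
    """Log-density of the standard normal base distribution."""
    return -0.5 * z * z - 0.5 * math.log(2.0 * math.pi)


def flow_log_prob(x: float, shift: float, log_scale: float) -> float:
    """Log-density of x under the affine flow x = shift + exp(log_scale) * z.

    Change of variables: log p(x) = log p_base(z) + log|dz/dx|,
    where z = (x - shift) * exp(-log_scale) and log|dz/dx| = -log_scale.
    """
    z = (x - shift) * math.exp(-log_scale)
    return base_log_prob(z) - log_scale
```

With `shift=1.0` and `log_scale=math.log(2.0)`, `flow_log_prob` matches the log-density of a normal distribution with mean 1 and standard deviation 2. Practical flows stack many such invertible layers with learnable parameters.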

Easy integration with normflows

Projective Preferential Bayesian Optimization

We studied a new type of Bayesian optimization for learning user preferences or knowledge in high-dimensional spaces. The user answers each query by selecting the preferred option with a slider. The method can find the minimum of a high-dimensional black-box function, a task that is often infeasible for existing preferential Bayesian optimization frameworks based on pairwise comparisons. Some applications:

- Preference learning with an order of magnitude fewer questions than classical pairwise comparison-based methods

- Expert knowledge elicitation whose outcome can be used, for example, to speed up downstream optimization tasks
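A slider query can be pictured as a one-dimensional search along a line through the input space. The sketch below simulates a user who moves the slider to the best point on such a line. That reading of the query, the function `slider_answer`, and the quadratic test function are assumptions made for illustration, and the Bayesian optimization loop that chooses the lines is omitted.

```python
from typing import Callable, Sequence


def slider_answer(
    f: Callable[[Sequence[float]], float],
    x: Sequence[float],
    xi: Sequence[float],
    alphas: Sequence[float],
) -> float:
    """Simulated user: return the slider position alpha that minimizes f
    along the line x + alpha * xi (assumed form of the query)."""
    def value_at(alpha: float) -> float:
        point = [x_i + alpha * d_i for x_i, d_i in zip(x, xi)]
        return f(point)

    return min(alphas, key=value_at)


def quadratic(point: Sequence[float]) -> float:
    """Hypothetical black-box function with its minimum at (0.5, 0.5, 0.5)."""
    return sum((v - 0.5) ** 2 for v in point)


# Slide along the first coordinate axis, starting from the origin.
best = slider_answer(quadratic, [0.0, 0.0, 0.0], [1.0, 0.0, 0.0],
                     [i / 100 for i in range(101)])
```

Under this reading, one slider answer locates the best of many candidate points on the line, whereas a pairwise comparison ranks only two points.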

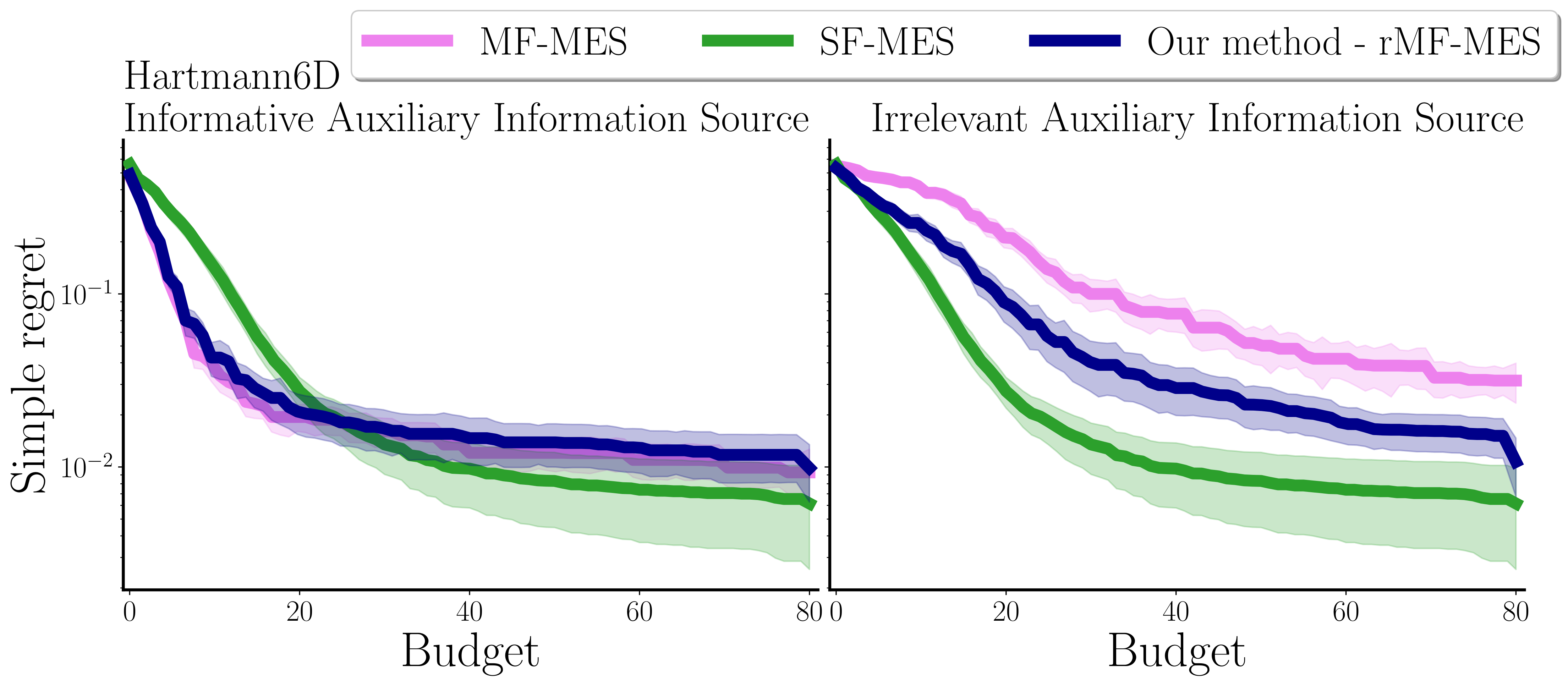

Multi-Fidelity Bayesian Optimization with Unreliable Information Sources

Multi-fidelity Bayesian optimization (MFBO) integrates cheaper, lower-fidelity approximations of the objective function into the optimization process. The project introduces rMFBO (robust MFBO), a methodology to make any MFBO algorithm robust to the addition of unreliable information sources. Some applications:

- Utilizing information sources of uncertain accuracy to accelerate optimization problems

- Incorporating human input as an information source while ensuring it doesn't negatively impact optimization

Easy integration with
